Preamble

This article is fiction. None of the events described have occurred. The people are invented. The scenarios are extrapolated from the legal text of Regulation (EU) 2024/1689 and from publicly available information about the EU AI Office's mandate, staffing, and responsibilities.

Everything that follows is, however, logically inevitable. The regulation's own architecture produces the loops described below. Whether they manifest on a single dramatic Monday morning or across years of slow institutional discovery is a question of timing, not of substance.

08:00 — The Brief

The new director of the European AI Office arrives at Avenue de Beaulieu 25 in Brussels on a Monday morning in September 2026. She has been in the role for three weeks. Before this, she led the digital transformation unit of a mid-sized Member State's ministry of justice. She is competent, methodical, and under no illusions about the scale of her task.

On her desk is a briefing document. It runs to forty-seven pages. The summary fits on one.

The AI Office has 130 identified responsibilities under the AI Act. These break down into four categories:

- 39 governance tasks — establishing the AI Board secretariat, appointing the scientific panel, setting up regulatory sandboxes, managing the EU database of high-risk systems, coordinating with 27 national market surveillance authorities

- 39 pieces of secondary legislation — delegated acts, implementing acts, guidelines, templates, codes of practice, standardisation requests, each with its own consultation process, stakeholder engagement, and legal review

- 34 enforcement categories — monitoring GPAI providers, assessing conformity derogations, coordinating joint investigations, receiving serious incident reports, evaluating codes of practice adequacy

- 18 evaluation tasks — reviewing the Act's effectiveness, assessing penalty harmonisation, reporting to Parliament, evaluating whether the list of prohibited practices needs updating

The AI Office has approximately 140 staff members. The director does the arithmetic. That is slightly more than one person per responsibility, before accounting for leave, illness, administrative overhead, and the fact that many of these responsibilities are not discrete tasks but continuous obligations requiring ongoing attention across years.
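The director's arithmetic can be reproduced in a few lines. This is only a sketch using the figures from the briefing summary above; the variable names are illustrative, and the headcount is the approximate number given in the text.

```python
# Figures taken from the briefing summary in the story.
responsibilities = {
    "governance": 39,
    "secondary_legislation": 39,
    "enforcement": 34,
    "evaluation": 18,
}
staff = 140  # approximate headcount stated in the text

total = sum(responsibilities.values())
staff_per_responsibility = staff / total

print(total)                               # 130
print(round(staff_per_responsibility, 2))  # 1.08
```

The ratio ignores leave, illness, and overhead, so it is an upper bound on the attention each responsibility can receive.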

She turns to the second page of the summary. It contains a recommendation from the transition team: deploy AI systems to assist with document analysis, monitoring, stakeholder communication, and evaluation. Standard digital government practice. The kind of recommendation that appears in every public sector modernisation report published since 2023.

The director agrees. There is no alternative. 140 people cannot manually execute 130 ongoing responsibilities across 27 Member States without technological assistance. She authorises the procurement process.

She does not yet realise what she has set in motion.

09:00 — The Procurement

The procurement team — four people, two of whom started last month — begins scoping the AI tools the office needs.

Document analysis — reviewing GPAI provider documentation (Table C, items 7-8), conformity assessment derogations (Table C, item 1), and annual reports from 27 Member States. Dozens of formats, languages, levels of completeness. An AI system would extract compliance indicators, flag inconsistencies, route documents to the right reviewer.

Monitoring — tracking GPAI provider obligations (Table C, items 8-14), codes of practice implementation (Table C, item 13), and maintaining the published list of systemic-risk models (Table C, item 6). A monitoring system would aggregate data feeds from 27 national authorities, flag anomalies, alert staff to non-compliance patterns.

Stakeholder communication — managing the advisory forum, the scientific panel, the AI Board, public consultations for all 17 delegated and implementing acts, and a single information platform for all operators. Across 27 Member States. In 24 official languages.

Evaluation — assessing the AI Act's effectiveness across 27 penalty regimes, sandbox outcomes, and enforcement actions, then reporting to Parliament (Table D, all 18 items).

The team drafts specifications. Standard public sector procurement. The team lead signs the scoping document and sends it to the compliance officer.

This is where the day begins to bend.

10:30 — The Classification

The compliance officer is a lawyer who transferred from DG JUST. She has spent two years reading the AI Act. She opens the procurement specifications and begins a routine assessment.

Each of the proposed tools is an AI system within the meaning of Article 3(1). The AI Office will deploy them. The AI Office's staff will operate them. This makes the AI Office a deployer under Article 3(4).

Article 4 applies. The AI Office must ensure, to its best extent, a sufficient level of AI literacy of its staff dealing with the operation and use of these AI systems.

She notes this. Standard obligation. Every deployer in Europe faces the same requirement. She moves on to the classification analysis.

The document analysis system. It reviews technical documentation from GPAI providers and flags whether they meet compliance thresholds. It evaluates outcomes. She checks Annex III. Area 3(b): AI systems intended to evaluate learning outcomes. No, that is education. But Area 6(c): AI systems intended to be used by or on behalf of law enforcement authorities to evaluate the reliability of evidence. Closer. She consults the guidelines the office itself published under Table B, item 18. The examples are not quite on point. She makes a note: the system that helps the AI Office assess compliance may itself require a compliance assessment.

The monitoring system. It aggregates data about organisations and flags patterns of non-compliance. It profiles organisations based on their behaviour, documentation quality, and incident history. She checks Article 6(3): an Annex III system that performs profiling of natural persons is always high-risk, regardless of derogations. The monitoring system profiles organisations, not natural persons. But those organisations are represented by natural persons: the named contacts, the responsible officers, the signatories of compliance declarations. If the system associates compliance risk scores with identifiable individuals (and how could it not, given that the AI Act assigns personal responsibility to authorised representatives?), then it profiles natural persons.

She sets down her pen. The monitoring system that the AI Office would use to track non-compliant deployers may itself be a high-risk AI system. Chapter III obligations apply: conformity assessment, human oversight, logging, fundamental rights impact assessment, and — critically — Article 4 literacy for the staff operating it.

The evaluation system. This is the one that makes her pause longest. The AI Office must evaluate the AI Act itself — whether its provisions are effective, whether its classification system captures the right risks, whether its penalties are proportionate. Table D, item 14 requires this evaluation by August 2029. If the office deploys an AI system to analyse enforcement data and generate evaluation reports, that system is making assessments about the regulatory framework that governs AI systems. Including itself.

She writes a single line in her assessment: "The evaluation tool is subject to the regulation it is evaluating."

12:00 — The Training Problem

The compliance officer's preliminary assessment reaches the HR and training lead. He reads it and understands the immediate implication: before the AI Office can deploy any of these tools, it must ensure AI literacy for all 140 staff members. Not generic AI awareness: Article 4 requires literacy calibrated to their "technical knowledge, experience, education and training and the context the AI systems are to be used in."

The AI Office employs lawyers, engineers, policy analysts, economists, communication specialists, and administrative staff. Their existing AI knowledge ranges from doctoral-level machine learning expertise to competent-but-non-technical policy work. A single training programme will not satisfy Article 4's differentiation requirement. The Commission's own Q&A from May 2025 confirmed that one-size-fits-all approaches are "generally not considered sufficient."

He needs an adaptive training platform. One that assesses each staff member's baseline knowledge, delivers personalised learning pathways, tests comprehension through scenario-based exercises relevant to each person's actual role, and updates continuously as the tools evolve.

The most effective system for this is an AI-powered adaptive learning platform.

He begins to write the specification. Then he stops.

An AI training platform that evaluates learning outcomes falls under Annex III, Area 3(b). High-risk. An AI training platform that assesses the appropriate education level for each individual falls under Area 3(c). Also high-risk. An AI training platform that monitors whether staff are engaging with the material and flags those falling behind — Area 3(d) if it detects prohibited behaviour, Area 4(b) if framed as performance monitoring in the employment context.

And if the platform uses any biometric signal to detect whether a learner is confused, frustrated, or disengaged (the single most useful feature of adaptive learning), it is not merely high-risk. It is prohibited under Article 5(1)(f). Emotion recognition in education and workplace contexts. Banned outright. From 2 February 2025. Nineteen months ago.

He reads Article 5(1)(f) again. Then he reads it a third time.

The office that enforces the prohibition on emotion recognition in workplace training cannot use emotion recognition in its own workplace training.

This is, he acknowledges, perfectly consistent. The regulation does not exempt its own enforcers. It should not. If the prohibition is justified — and the legislative rationale in Recital 44 is clear about why it is — then it applies to the European Commission's staff exactly as it applies to everyone else.

But the consequence is that the AI Office must build AI literacy for 140 people, across diverse technical backgrounds, for multiple complex AI systems, using training methods that are categorically less effective than the AI-powered methods available to any private sector organisation willing to accept the compliance burden. And the AI Office cannot accept the compliance burden because the high-risk classification of its own training platform would generate obligations that its 140 staff — the same 140 staff who need the training — would need to manage.

The training is for the tools. The tools include the training platform. The training platform requires training on the training platform.

He draws a circle on his notepad and stares at it.

14:00 — The Infinite Loop

The compliance officer, the HR lead, the procurement team leader, and two senior policy analysts gather in Conference Room 4.12. Someone has brought a whiteboard.

The compliance officer presents her classification analysis. The HR lead explains the training problem. The procurement lead notes that the timeline for the monitoring system was supposed to be eight weeks.

A junior analyst — she joined from a Member State's digital agency two months ago — picks up a marker and begins drawing on the whiteboard.

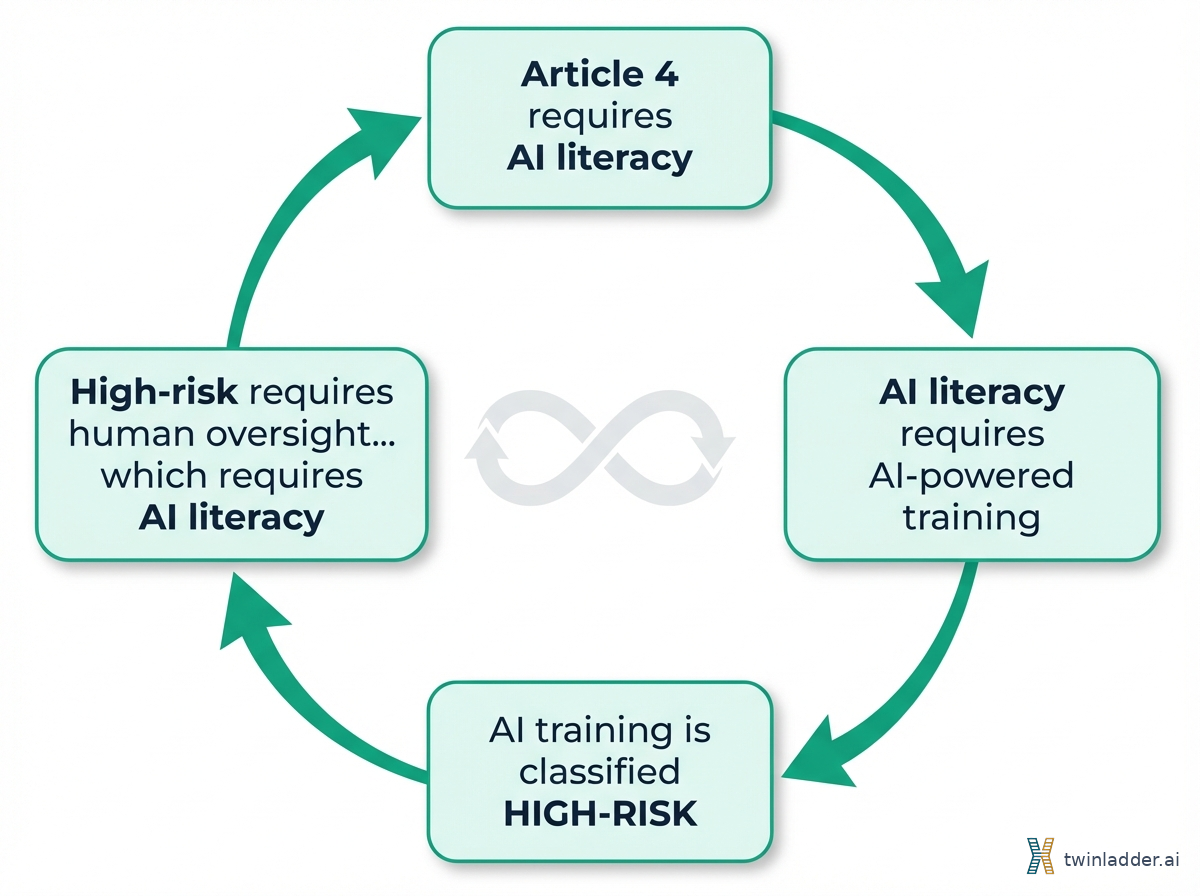

She draws a box: Article 4 requires literacy.

An arrow: literacy requires training.

Another box: Training AI is high-risk.

An arrow: high-risk requires Chapter III compliance.

Another box: Chapter III requires human oversight.

An arrow: human oversight requires literacy.

Another box: Literacy requires training.

She draws an arrow back to the first box.

The room is quiet for eleven seconds. Someone later tells me it felt much longer.

"This is the regulation we wrote," says the compliance officer.

"Drafted," corrects the senior policy analyst. "The co-legislators wrote it. We implement it."

"The distinction," says the junior analyst, still holding the marker, "does not change the geometry."

She is right. It does not.

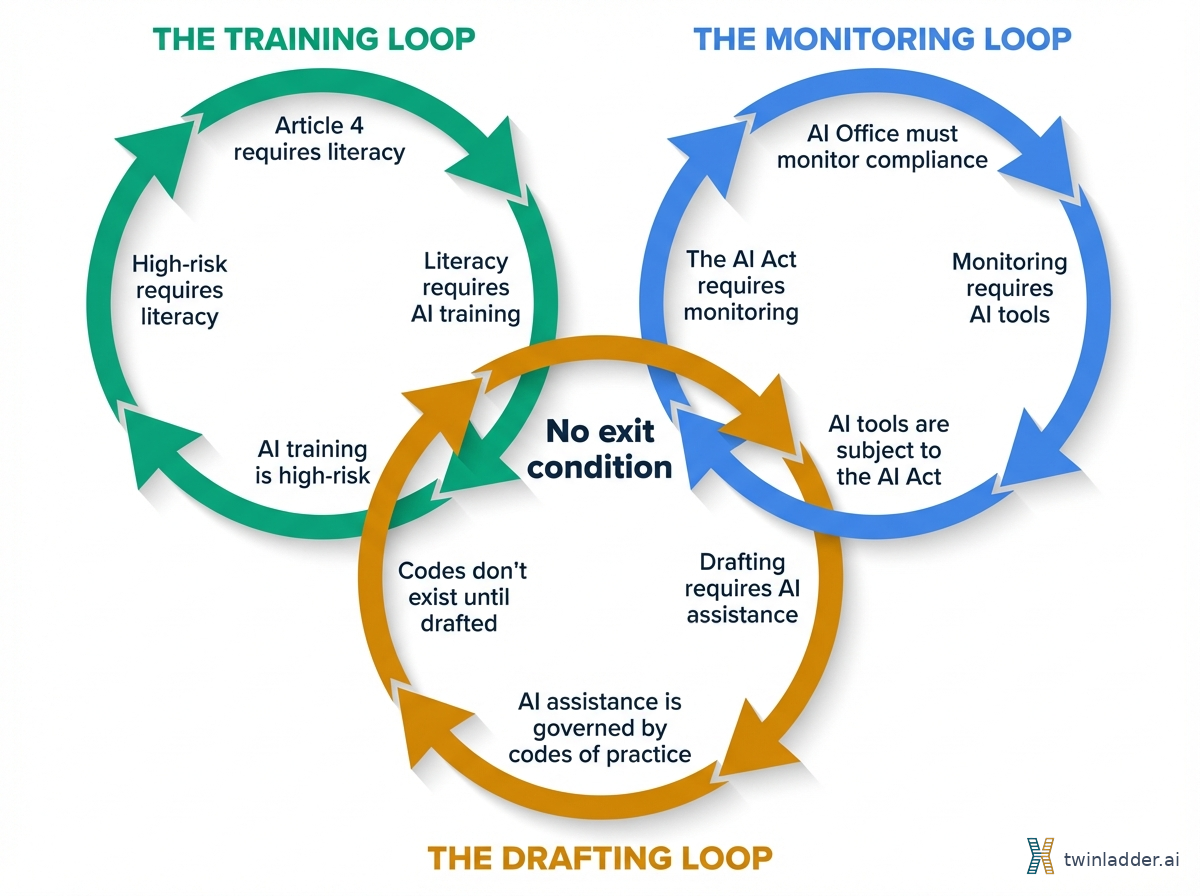

The loop is not a bug in the implementation. It is a structural property of the legal architecture. Article 4 applies to all AI systems without exception. Annex III classifies education and training AI as high-risk without exemption for compliance-purpose deployments. Article 5 prohibits emotion recognition in workplace training without exemption for the training needed to comply with the regulation that contains the prohibition. Article 6(3)'s derogations are overridden by the profiling clause: any Annex III system that profiles natural persons remains high-risk regardless.

Every exit from the loop leads back into it.
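The whiteboard loop can be read as a dependency graph in which every requirement points to the thing it requires. The sketch below is only an illustration of that reading: the node names are informal labels chosen here, not terms defined in the regulation, and `find_cycle` is a hypothetical helper that follows the arrows until it revisits a node.

```python
# Each key requires the node it points to, following the whiteboard arrows.
# Node names are illustrative labels, not terms defined in the regulation.
requires = {
    "article_4_literacy": "training",
    "training": "high_risk_training_ai",
    "high_risk_training_ai": "chapter_iii_compliance",
    "chapter_iii_compliance": "human_oversight",
    "human_oversight": "article_4_literacy",
}


def find_cycle(graph, start):
    """Follow single-successor edges from start; return the cycle if one is reached."""
    path = []
    seen = {}  # node -> index in path where it was first visited
    node = start
    while node in graph:
        if node in seen:
            # Revisited a node: everything from its first visit onward is the loop.
            return path[seen[node]:] + [node]
        seen[node] = len(path)
        path.append(node)
        node = graph[node]
    return None  # the chain ended at a node with no further requirement


cycle = find_cycle(requires, "article_4_literacy")
print(" -> ".join(cycle))

# A hypothetical exit: if training did not have to run through high-risk AI,
# the chain would terminate instead of returning to its starting point.
with_exit = dict(requires, training="non_ai_training")
print(find_cycle(with_exit, "article_4_literacy"))  # None
```

In this toy model the loop closes only because every node has an outgoing arrow; removing a single dependency is enough to make the chain terminate.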

The junior analyst adds a second loop to the whiteboard. The monitoring system that tracks whether deployers comply with the AI Act is itself subject to the AI Act. The AI Office must monitor its own monitoring system. It must ensure compliance of its own compliance tools. It must oversee its own oversight mechanisms.

She adds a third loop. The codes of practice that the AI Office must develop (Table B, items 30-31) will be drafted with AI assistance — because 140 people cannot produce 39 pieces of secondary legislation without it. The AI systems used to draft codes of practice about AI systems are themselves governed by those codes of practice. The drafting tool must comply with the code it is helping to draft, but the code does not exist until the tool finishes drafting it.

Three loops on a whiteboard. Three instances of the same structural pattern. The regulation assumes a separation between the regulator and the regulated, between the compliance tool and the compliance obligation, between the training system and the training requirement. In a world where AI is pervasive, that separation does not hold.

"How many of our 130 responsibilities," asks the director, who has been listening from the doorway, "can we fulfil without using AI?"

The room does another calculation. The consensus lands somewhere around forty. The straightforward administrative tasks — publishing lists, maintaining databases, receiving notifications. Everything that requires analysis, monitoring, evaluation, or communication at scale across 27 Member States requires technological assistance that, in 2026, means AI.

Ninety of 130 responsibilities effectively require AI tools. Each AI tool triggers Article 4. Article 4 compliance at the scale and specificity the regulation demands requires AI. Which triggers Article 4.

"We are," says the senior policy analyst, "the deployer the regulation was written for. And we cannot deploy."

15:30 — The Evaluation

The afternoon session turns to Table D. The evaluation tasks.

Table D, item 14 is the centrepiece: by August 2029, the AI Office must assess "the need for amendment of this Regulation" — examining the oversight system it operates, the conformity assessment bodies it supervises, and the resources of national authorities it coordinates with. Twenty-seven Member States. Hundreds of conformity bodies. Thousands of high-risk deployments. Three years of enforcement data.

To process this, the office will deploy an evaluation system. The junior analyst adds a fourth loop to the whiteboard.

The evaluation system analyses the AI Act's effectiveness. The AI Act governs the evaluation system. If it finds the classification framework too broad — that it captures compliance tools that should not be high-risk — the finding undermines the regulatory basis for the evaluation system's own classification. If it finds the framework appropriate, it validates constraints on its own design. The system's operational parameters are determined by the framework it is evaluating. It is like asking a fish to assess the properties of water.

"We could use a non-AI evaluation methodology," suggests someone.

"For 27 Member States, 34 enforcement categories, and three years of data?" replies the economist. "With what staff?"

Table D, item 6 deepens the loop. By August 2028, the office must assess whether the high-risk classification list in Annex III needs amending — including Area 3, the classification that makes the office's own training tools high-risk. The evaluation's outcome determines the regulatory status of the evaluation's own instruments.

The junior analyst runs out of whiteboard space. She photographs what she has drawn. Four interlocking loops. She captions the image: "The architecture of the regulation we enforce."

17:00 — The Memo

The director closes the door to her office and opens a blank document. Subject line: "Structural observation regarding Article 4 implementation in the context of AI Office operations."

She writes:

The AI Office has identified a recursive dependency in the AI Act's obligation structure that manifests when the implementing body itself deploys AI systems to fulfil its mandate.

Article 4 requires AI literacy for all deployers. Achieving meaningful literacy at the specificity required by the Commission's own Q&A guidance necessitates AI-powered adaptive training. AI-powered training is classified as high-risk under Annex III, Area 3. High-risk classification triggers Chapter III obligations, including human oversight, which in turn requires the AI literacy that the training was intended to provide.

This recursive structure is not unique to the AI Office. It applies to every organisation in Europe whose AI compliance obligations are large enough to require AI-assisted management. The AI Office encounters it first because its obligation set is the largest in the regulatory ecosystem: 130 responsibilities across governance, legislation, enforcement, and evaluation.

The observation does not indicate a failure of intent. The regulation's objective — that people who use AI must understand it — is correct and necessary. The observation indicates a structural gap between the regulation's architecture and the operational reality of AI deployment at institutional scale.

She considers what to propose. Three changes have been circulating in the policy literature. She has read them in different forms from different sources. They converge because the logic converges.

Three structural amendments would resolve the recursive dependency while preserving and strengthening the regulation's objectives:

First: establish a "learning deployment" category. AI systems deployed primarily for competence development, training, and compliance purposes should carry proportionate obligations lighter than those for AI systems deployed in decision-making or automation contexts. The distinction is functional, not technological. A training system that builds human capability has a categorically different risk profile from a screening system that replaces human judgment. The regulation should recognise this.

Second: mandate continuous compliance rather than point-in-time assessment. AI literacy against a moving technological frontier cannot be ensured at a moment in time. The regulation should assess whether organisations maintain active, evolving competence mechanisms — not whether they conducted training on a particular date. This would allow compliance tools to evolve alongside the technology they govern, without each update triggering a new compliance cycle.

Third: measure competence outcomes, not compliance inputs. If the regulatory objective is that people understand AI, the assessment should be whether they demonstrably do — not whether the organisation purchased a training programme. An outcome-based model dissolves the recursive dependency entirely: it does not matter whether the organisation used AI or chalk and a blackboard to build literacy. What matters is whether the people can demonstrate the competence. The method becomes irrelevant. The loop disappears.

She reads it back. It is, she realises, the same set of proposals she encountered last week in a research paper from a consultancy in Riga. She had flagged it as thoughtful but academic. Now she sees it differently. The logic is the same whether you are a 50-person law firm or the European AI Office, because the structural problem is the same.

She saves the memo. It will enter the institutional process. It may surface in the 2029 evaluation. It may not.

The whiteboard in Conference Room 4.12 will not be erased for three weeks. Staff from other units will come to look at it. Some will add their own loops.

The Coda

This thought experiment is not about the EU AI Office specifically. Any institution could be substituted. A national market surveillance authority trying to monitor AI deployments across its jurisdiction. A large hospital system deploying AI diagnostics while training its clinicians on AI literacy. A multinational bank using AI to comply with AI regulations across twelve Member State implementations. The recursive structure is embedded in the regulation, not in any particular deployer.

The AI Act is the most ambitious technology regulation ever attempted. Its scope, its specificity, and its willingness to prohibit rather than merely constrain distinguish it from every predecessor. Article 4's literacy obligation is, in my view, its most important provision — more consequential than the prohibited practices in Article 5, more far-reaching than the high-risk requirements in Chapter III. If people do not understand AI, nothing else in the regulation works.

But the most important regulation of the decade contains a design flaw that its own implementing body cannot resolve using the tools available to it. The regulation demands AI literacy. The most effective path to AI literacy runs through AI. The regulation classifies that path as high-risk. The high-risk obligations require literacy. The loop has no exit condition.

This is not a criticism. It is a diagnosis. And like all diagnoses, it is the first step toward treatment.

The treatment is not complicated. A learning deployment category. Continuous compliance. Outcome-based measurement. Three amendments that preserve the regulation's intent while dissolving the recursive structure that undermines it.

The question is whether the institution responsible for the regulation can recognise the recursion while standing inside it. On the evidence of this thought experiment, the answer is: yes, but only on the day they try to use AI themselves. Only when the abstraction of "deployer obligations" becomes the concrete reality of 140 people, 130 responsibilities, and a whiteboard full of loops that lead nowhere.

That day is coming. The arithmetic guarantees it.

Sources

- Kai Zenner, "Responsibilities of European Commission (AI Office)": Comprehensive enumeration of 130 AI Office responsibilities across four tables: governance (39), secondary legislation (39), enforcement (34), evaluation (18). Published 22 August 2024. https://artificialintelligenceact.eu/responsibilities-of-european-commission-ai-office/

- Regulation (EU) 2024/1689, Article 4: AI Literacy obligation. Single-sentence provision requiring providers and deployers to ensure sufficient AI literacy of staff, applicable from 2 February 2025 with no size or sector exemption. https://artificialintelligenceact.eu/article/4/

- Regulation (EU) 2024/1689, Annex III, Area 3: High-risk classification for AI in education and vocational training: determining admission, evaluating learning outcomes, assessing appropriate education level, monitoring behaviour during tests. https://artificialintelligenceact.eu/annex/3/

- Regulation (EU) 2024/1689, Article 5(1)(f): Prohibition of emotion recognition AI systems in education and workplace contexts. No exemption for compliance or training purposes. https://artificialintelligenceact.eu/article/5/

- Regulation (EU) 2024/1689, Article 6(3): Derogation conditions for Annex III systems, with critical override: profiling of natural persons always remains high-risk regardless of derogations. https://artificialintelligenceact.eu/article/6/

- Regulation (EU) 2024/1689, Article 26: Obligations for deployers of high-risk AI systems: human oversight, data quality, monitoring, logging, notification, and fundamental rights impact assessment. https://artificialintelligenceact.eu/article/26/

- European Commission, AI Literacy - Questions & Answers (May 2025): Clarified that directing staff to user manuals is "generally not considered sufficient" and that one-size-fits-all training fails the differentiation requirement. https://digital-strategy.ec.europa.eu/en/faqs/ai-literacy-questions-answers

- European Commission Decision of 24 January 2024 establishing the European AI Office: Articles 2, 4, 5, 7 establishing the AI Office's mandate, cooperation structures, and relationship with other Commission services. https://digital-strategy.ec.europa.eu/en/policies/ai-office

- Regulation (EU) 2024/1689, Recital 20: Legislative intent for AI literacy: equipping people "with the necessary notions to make informed decisions regarding AI systems." https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX:32024R1689

- Regulation (EU) 2024/1689, Recital 44: Rationale for prohibition of emotion recognition in workplace and education settings: risks to fundamental rights including dignity and non-discrimination. https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX:32024R1689

- TwinLadder Assessment Maturity Model v1.0: Seven-pillar framework for AI literacy and competence assessment, proposing outcome-based measurement and continuous compliance as structural solutions to the Article 4 paradox. CC BY-SA 4.0. https://twinladder.ai/en/research/assessment-maturity-model

- "The Literacy Paradox: How Article 4 May Prevent the Competence It Demands": TwinLadder Research, March 2026. Full analysis of the infinite regress, competence degradation paradox, and classification trap in Article 4. https://twinladder.ai/en/research/article-4-literacy-paradox