TwinLadder Assessment Maturity Model v1.0

Six-Pillar Rubric for AI Literacy and Competence Assessment

Framework Version: 1.0.0 | License: CC BY-SA 4.0 | TwinLadder Research

We built a framework for measuring organisational AI competence. Then we published it on GitHub under a CC BY-SA 4.0 licence. Not because we are generous — because we are serious.

Article 4 of the EU AI Act requires "sufficient AI literacy." It does not define what sufficient means. No regulator has. So the market is defaulting to checkboxes and certificates — the same hollow response that turned GDPR into a cookie banner exercise.

A proprietary standard stays small, validated only by its creator. An open standard compounds. Every consultant who adopts it validates the methodology. Every training provider who references it extends the reach. Every jurisdiction guide contributed by a local expert takes it somewhere we could not go alone.

TwinLadder is the steward of this framework, not the gatekeeper. We authored version 1.0. The community will shape what comes next.

Take the free assessment. Ten minutes. No account required.

Overview

This document defines the detailed maturity model for the six pillars of the TwinLadder Assessment framework: Deployment Competence, Policy & Data Protection, Training, Tools, Evidence, and Governance. Each pillar is evaluated across four maturity stages:

| Stage | Score Range | Label |
|---|---|---|
| 1 | 0-25 | Exploring |
| 2 | 26-50 | Developing |
| 3 | 51-75 | Implementing |
| 4 | 76-100 | Optimizing |
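
The stage table can be read as a simple lookup. The sketch below is illustrative only: the name `stage_for` is hypothetical, and the handling of fractional scores (a score stays in the lower stage until it reaches the next stage's lower bound) is an assumption, since the table lists whole-number boundaries only.

```python
# Lower bound of each stage, copied from the stage table.
STAGES = (
    (1, "Exploring", 0),
    (2, "Developing", 26),
    (3, "Implementing", 51),
    (4, "Optimizing", 76),
)


def stage_for(score: float) -> tuple[int, str]:
    """Return (stage number, label) for a pillar score between 0 and 100."""
    if not 0 <= score <= 100:
        raise ValueError(f"score must be between 0 and 100, got {score}")
    number, label = STAGES[0][0], STAGES[0][1]
    # Assumption: keep the highest stage whose lower bound has been reached.
    for stage_number, stage_label, lower_bound in STAGES:
        if score >= lower_bound:
            number, label = stage_number, stage_label
    return number, label


print(stage_for(42))
```

A score of 42 falls in the 26-50 band, so the call returns stage 2, Developing.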

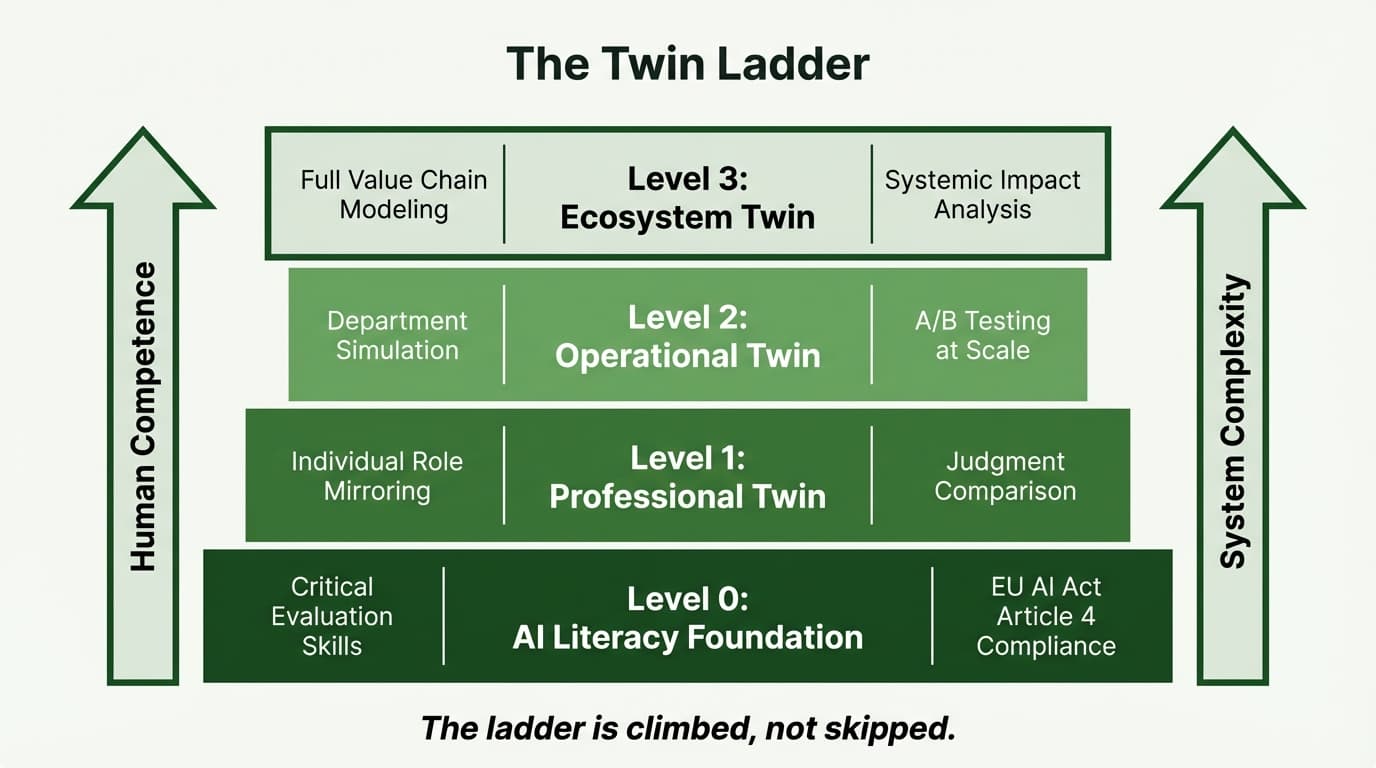

These map to the TwinLadder Framework levels:

- Exploring / Developing = working toward Level 0 (AI Literacy) — the Article 4 compliance floor

- Implementing = achieving Level 1 (Professional Twin) — implied by Article 4's "context" requirement

- Optimizing = progressing through Level 2 (Operational Twin) toward Level 3 (Ecosystem Twin)

The compliance floor (Article 4 baseline) falls at a score of approximately 50-55. Scores below the floor represent regulatory risk. Scores above it represent the competence mission.

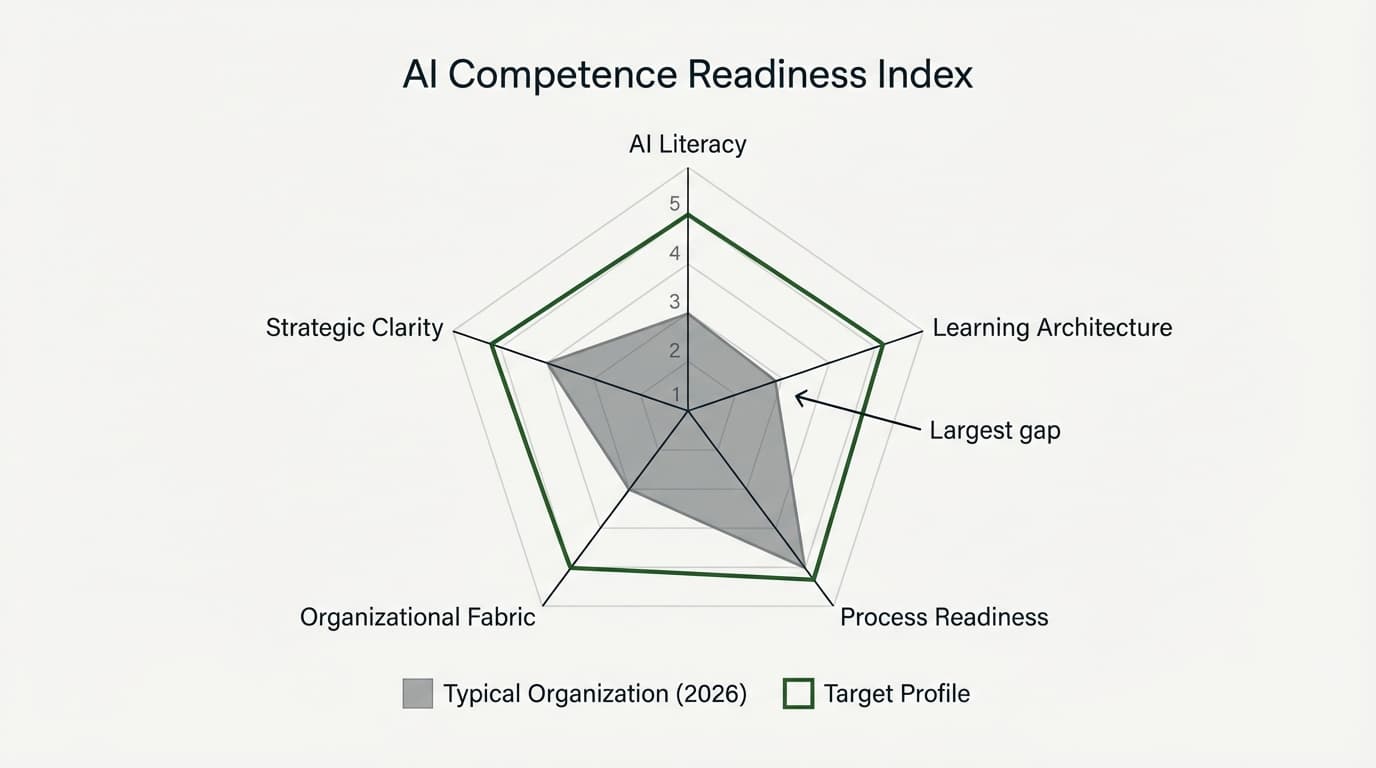

The Competence Paradox

AI simultaneously improves performance and degrades capability. Organisations that adopt AI tools without structured competence development accumulate "competence debt." This model detects and counteracts that paradox.

Compliance vs. Competence

Article 4 establishes a floor, not a ceiling. This model interprets "sufficient" as requiring at minimum the Developing stage (26-50) across all pillars, with the Expert Standard targeting Implementing (51-75).

Competitive Positioning

TwinLadder is the only framework that is simultaneously:

- Competence-specific — centres entirely on individual and organisational AI competence, not governance, risk management, or technical safety

- Article 4-native — purpose-built for the EU AI Act's literacy obligation, not retrofitted from a broader governance model

- Open-source — released under CC BY-SA 4.0; free to adopt, adapt, and redistribute

- Individual + organisational: assesses both per-person competence (Levels 0-3) and organisational maturity (six-pillar scoring), producing evidence for both Article 4 and ISO 42001 Clause 7.2

- Workflow-based — measures practical competence through task appropriateness, output verification, and risk judgment, not technical knowledge testing

No other framework reviewed — from ISO 42001 to Gartner's five-level model to MITRE's twenty-dimension assessment — combines all five characteristics. Most frameworks treat competence as one dimension among many; TwinLadder makes it the entire methodology.

Pillar 1: Deployment Competence

Weight: 0.15 | Expert Standard: 70

What this measures: Whether staff understand what AI systems are used, can articulate limitations and risks, share organisation-wide understanding of Article 4 obligations, and consider the impact on persons affected by AI-driven decisions.

Level 1 — Exploring (0-25)

No structured approach to AI awareness. Individual staff may use AI informally with no shared understanding.

Indicators:

- No formal communication issued regarding AI use

- Staff cannot name AI tools embedded in their daily software

- No assessment of which roles interact with AI systems

- No one can describe Article 4 requirements

- AI adoption driven by individual experimentation with no management visibility

- No consideration of how AI-driven outputs affect customers, applicants, or other third parties

Threshold: Fewer than 25% of AI-interacting staff can describe what AI systems do or identify limitations. No documented awareness activity.

Level 2 — Developing (26-50)

Organisation has begun addressing awareness, typically triggered by regulatory pressure. Some staff received orientation-level information.

Indicators:

- At least one awareness communication distributed organisation-wide

- Leadership briefed on Article 4 obligations

- Staff in high-exposure roles can name the AI tools they use

- Some staff can describe AI limitations in general terms

- Initial mapping of which roles interact with AI systems

- Some recognition that AI outputs affect external persons, but no structured assessment of impact

Threshold: 50% of AI-interacting roles have received awareness communication. Leadership can articulate Article 4 at a general level.

Level 3 — Implementing (51-75)

Structured AI literacy programme. Staff understand capabilities and limitations in their specific role context.

Indicators:

- Structured literacy programme with documented objectives and role-specific content

- Staff can articulate AI limitations specific to their professional context

- New hires receive AI orientation calibrated to their role

- Organisation-wide Article 4 awareness demonstrable across operational staff

- Staff comfort with AI tools measured by periodic assessment

- AI systems classified by impact on affected persons (customers, applicants, employees, patients); staff in high-impact roles trained on implications for those persons

Threshold: 75% of AI-interacting staff completed structured training. Staff identify risks in own workflows. Article 4 awareness extends beyond leadership.

Level 4 — Optimizing (76-100)

AI literacy embedded in organisational culture. Staff anticipate how emerging capabilities affect their work.

Indicators:

- AI literacy embedded in performance expectations and professional development

- Staff proactively identify anomalous AI outputs without prompting

- Organisation tracks emerging AI developments and communicates relevance

- Peer-to-peer AI knowledge sharing is active and organic

- Periodic literacy assessments inform targeted refresher training

- Affected-person impact is a standing element in AI deployment reviews; staff routinely assess how AI outputs affect third parties before acting on them

Threshold: 90%+ of AI-interacting staff demonstrate contextual literacy through verified assessment. Staff evaluate new AI tools independently.

Pillar 2: Policy & Data Protection

Weight: 0.20 | Expert Standard: 60

What this measures: Formal, enforced policies governing AI use — acceptable use boundaries, prohibited applications, procurement approval, risk classification — integrated with GDPR-compliant data protection for AI workflows.

Level 1 — Exploring (0-25)

No formal AI policy. AI use is ungoverned. Shadow AI pervasive. No data classification for AI tools.

Indicators:

- No written document governs AI use

- Staff use personal AI accounts for work with no oversight

- No distinction between acceptable and prohibited applications

- No process for evaluating new AI tools before adoption

- Confidential information may enter AI tools without controls

- No data classification for AI tools; staff unaware that sending personal data to LLMs may constitute an international transfer under GDPR

Level 2 — Developing (26-50)

AI policy exists in draft or initial form. Some boundaries communicated but enforcement inconsistent. Basic data protection awareness emerging.

Indicators:

- Formal AI use policy drafted/published addressing permitted tools, data, and review

- Acceptable and prohibited use cases defined in writing

- Basic approval process exists for new AI tools

- Staff aware policy exists and can locate it

- Data protection considerations identified

- Basic data classification exists; approved AI tool list considers data processing locations and arrangements; privacy notices do not yet mention AI-assisted processing

Level 3 — Implementing (51-75)

Comprehensive, enforced, regularly reviewed policy. Role-specific acceptable use boundaries. GDPR integration operationalised.

Indicators:

- Policy covers all AI-interacting roles, data classification, review requirements, consequences

- Use cases categorised by risk level

- Formal approval workflow for AI tool procurement

- Policy compliance monitored through regular checks

- Policy undergoes scheduled review (annually minimum)

- DPIA completed for AI tools processing personal data; DPAs in place with AI providers; staff trained on which data categories are permissible per tool

Level 4 — Optimizing (76-100)

Living governance instrument, continuously refined. Policy drives strategic AI adoption. Privacy-by-design embedded.

Indicators:

- Version-controlled policy with change logs and event-driven review

- AI use cases mapped to specific roles, workflows, and decision points

- Risk-based approval with expedited paths for low-risk tools

- Policy effectiveness measured through compliance metrics and incident rates

- Organisation contributes to industry-level policy development

- Privacy-by-design embedded in AI workflows; automated data classification for AI inputs; regular AI data flow audits; Article 22 GDPR compliance verified for automated decisions affecting individuals

Pillar 3: Training (Training & Development)

Weight: 0.20 | Expert Standard: 50

What this measures: Structured, role-specific AI training building workflow-based competence — output verification, risk identification, ethical boundaries, task appropriateness judgment — for all persons interacting with AI systems on the organisation's behalf, including contractors and outsourced providers.

Level 1 — Exploring (0-25)

No structured AI training. Staff self-taught through trial-and-error.

Indicators:

- No formal training programme for any role

- Staff entirely self-taught on AI tools

- No role-specific training materials

- AI competence neither assessed nor tracked

- Any training is generic technical content, not workflow-based

- Contractors and outsourced service providers receive no AI literacy training or requirements

Level 2 — Developing (26-50)

Initial training provisions. Some structured content exists, beginning to shift to practical competence.

Indicators:

- At least one structured training module available

- Content addresses practical skills — verification, limitation recognition

- Training available for high-exposure roles

- Self-assessment mechanisms exist

- Training completion recorded

- Some awareness that contractors need AI training, but no formal inclusion in programme

Level 3 — Implementing (51-75)

Comprehensive role-specific programme. Workflow-based methodology. Competence assessed through practical evaluation.

Indicators:

- Role-specific programmes for all major AI-interacting functions

- Workflow-based approach with scenarios from actual practice

- Competence assessed through practical evaluation, not just knowledge testing

- Materials updated quarterly

- Continuous learning infrastructure beyond initial training

- Contractors, temporary workers, and outsourced providers included in AI literacy requirements; outsourcing contracts include AI competence clauses

Level 4 — Optimizing (76-100)

Continuously optimised based on competence data. Self-sustaining learning ecosystem.

Indicators:

- Validated competence framework with measurable benchmarks per role

- Training effectiveness measured through outcome metrics

- Periodic reassessment with personalised development paths

- Internal AI competence certification recognised as meaningful

- Deliberate practice and AI-free assessments address competence paradox

- Third-party competence verification integrated into vendor management; AI literacy requirements in procurement specifications

Pillar 4: Tools (Technical Infrastructure)

Weight: 0.15 | Expert Standard: 55

What this measures: Control over AI technical infrastructure — tool inventory, human oversight mechanisms, data protection compliance, risk classification.

Level 1 — Exploring (0-25)

No visibility into AI tools. Shadow AI prevalent. No oversight mechanisms.

Indicators:

- No inventory of AI tools exists

- Staff use consumer-grade AI (personal accounts) for work including sensitive info

- No human review requirement for AI outputs

- No DPIAs for AI tools

- No risk-level distinction between AI tools

Level 2 — Developing (26-50)

Begun documenting AI tool landscape. Partial inventory. Some review processes.

Indicators:

- Partial AI tool inventory covering primary tools known to management

- Human review required for high-risk AI outputs

- Basic data protection checks for primary tools

- Basic risk classification (approved vs not-yet-assessed)

- Some controls to limit unapproved tools

Level 3 — Implementing (51-75)

Comprehensive maintained inventory with risk classifications. Systematic oversight.

Indicators:

- Complete tool inventory with ownership, purpose, data classification, risk, review dates

- Systematic human oversight defined by risk level

- DPIAs completed for all relevant tools

- Tools classified by risk with corresponding controls

- Technical controls complement policy (enterprise accounts, DLP, access controls)

Level 4 — Optimizing (76-100)

Strategic AI tool management. Live registries. Continuous monitoring. Proactive evaluation.

Indicators:

- Live AI tool registry with usage metrics as management tool

- Audit trails linking AI outputs to human review decisions

- Continuous performance monitoring including accuracy and drift detection

- AI-specific incident response process

- Proactive risk-based evaluation with post-deployment monitoring

Pillar 5: Evidence (Evidence & Documentation)

Weight: 0.15 | Expert Standard: 40

What this measures: Whether the organisation can demonstrate compliance if audited — training records, competence assessments, policy documents, incident logs, decision trails, and proportionality reasoning.

Level 1 — Exploring (0-25)

No systematic documentation. Nothing to show a regulator.

Indicators:

- No centralised repository for AI compliance documentation

- Training completions not tracked

- AI-related decisions not documented

- Incidents not logged

- No evidence portfolio, no audit preparation

Level 2 — Developing (26-50)

Begun building evidence base. Training records captured for recent activities. Some documentation exists.

Indicators:

- Training completion records exist for recent activities

- AI policy document maintained in retrievable format

- Some incidents documented, though inconsistently

- Basic evidence could be assembled if requested — scattered but extant

- Retention policy developing

Level 3 — Implementing (51-75)

Comprehensive audit-ready evidence portfolio. Systematic capture and retrieval.

Indicators:

- Centralised evidence portfolio with sections per compliance pillar

- Training records include competence assessment results

- Structured incident documentation (what, when, tool, impact, corrective action)

- Timestamped evidence with version information

- Defined retention policy aligned with regulatory expectations

- Proportionality reasoning documented — a record of why the organisation's AI literacy measures represent its best effort given available resources, organisational size, and AI deployment profile

Level 4 — Optimizing (76-100)

Living compliance instrument. Automated capture. Evidence drives continuous improvement.

Indicators:

- Automated evidence capture (training completions, competence scores, usage data)

- Independent verification elements (external audits, third-party certifications)

- Evidence analysis drives governance decisions (trends, gaps, effectiveness)

- Lessons-learned process feeds into policy and training updates

- Portfolio structured for regulatory engagement

- Proportionality reasoning reviewed annually and updated when resources, headcount, or AI deployment profile changes

Pillar 6: Governance (Ethical & Responsible Use)

Weight: 0.15 | Expert Standard: 45

What this measures: Accountability structures, ethical oversight, regulatory monitoring. The capstone pillar ensuring all other pillars function as an integrated system.

Level 1 — Exploring (0-25)

No one responsible for AI governance. No ethical review. No regulatory monitoring.

Indicators:

- No designated AI governance responsibility

- Ethical considerations not part of AI deployment decisions

- No monitoring of AI regulatory developments

- No escalation path for ethical concerns

- No coordination between compliance activities

Level 2 — Developing (26-50)

Governance responsibility assigned informally. Some ethical consideration. Some regulatory awareness.

Indicators:

- Named individual responsible for AI governance (even as additional duty)

- Basic ethical checklist for significant AI decisions

- Monitoring of major regulatory developments

- Mechanism to raise ethical concerns

- Some cross-pillar coordination through governance lead

Level 3 — Implementing (51-75)

Dedicated governance structure. Ethical review integrated. Systematic regulatory monitoring.

Indicators:

- Dedicated AI governance team/committee with defined mandate and cadence

- Ethical review process for AI deployments above risk threshold

- Systematic regulatory monitoring with governance actions

- Cross-pillar coordination ensuring coherence

- Governance reporting to senior leadership/board

- For organisations operating in multiple EU jurisdictions: governance structures account for national implementation variations (e.g., German works council co-determination rights under BetrVG Section 87 for AI systems monitoring employee behaviour or performance)

Level 4 — Optimizing (76-100)

Strategic governance function with board visibility. Ethics review has authority. Sector leadership.

Indicators:

- Board-level AI governance reporting with KPIs

- Ethics review with decision-making authority (can block deployments)

- Organisation contributes to sector-level governance standards

- Proactive regulatory intelligence anticipating changes

- Governance enables responsible innovation within clear guardrails

- Jurisdiction-specific compliance requirements mapped and monitored; national enforcement practice differences integrated into governance decisions

Pillar Weight Rationale

The six pillars are weighted as follows:

| Pillar | Weight |
|---|---|
| Deployment Competence | 0.15 |
| Policy & Data Protection | 0.20 |
| Training | 0.20 |
| Tools | 0.15 |
| Evidence | 0.15 |
| Governance | 0.15 |
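
As an illustration of how these weights could combine into a single overall score, here is a minimal sketch. It assumes the overall score is a plain weighted sum of the six pillar scores; the document refers to a "weighted" score without spelling out the formula, so treat that as an assumption. The function name and the example scores are hypothetical.

```python
# Pillar weights copied from the weight table.
WEIGHTS = {
    "Deployment Competence": 0.15,
    "Policy & Data Protection": 0.20,
    "Training": 0.20,
    "Tools": 0.15,
    "Evidence": 0.15,
    "Governance": 0.15,
}


def overall_score(pillar_scores: dict[str, float]) -> float:
    """Weighted sum of the pillar scores (assumed aggregation rule)."""
    missing = WEIGHTS.keys() - pillar_scores.keys()
    if missing:
        raise ValueError(f"missing pillar scores: {sorted(missing)}")
    total = sum(WEIGHTS[name] * pillar_scores[name] for name in WEIGHTS)
    return round(total, 2)


# Hypothetical assessment result, for illustration only.
example = {
    "Deployment Competence": 60,
    "Policy & Data Protection": 70,
    "Training": 50,
    "Tools": 40,
    "Evidence": 30,
    "Governance": 45,
}
print(overall_score(example))
```

Because the weights sum to 1.0, the result stays on the same 0-100 scale as the individual pillars.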

Why approximately equal weighting?

All six pillars are necessary; none alone is sufficient. An organisation with excellent training but no policy is not compliant. An organisation with comprehensive governance but no evidence cannot demonstrate compliance. Article 4 does not prioritise any dimension over the others; it requires "measures" (plural) addressing literacy holistically.

Training and Policy & Data Protection receive slightly higher weight (0.20 each) because they represent the most directly actionable compliance measures. Training is the primary mechanism for building the "sufficient level of AI literacy" that Article 4 demands. Policy & Data Protection defines the boundaries within which AI may be used and addresses the GDPR intersection that European organisations cannot ignore.

These weights reflect the initial calibration of the standard. They may be adjusted by the Standard Governance Board based on enforcement practice data, national competent authority guidance, and empirical evidence from assessment deployments. Any weight adjustment will follow the public comment process described in the Versioning & Governance section.

Cross-Pillar Dependencies

Dependency Matrix

Pillars do not exist in isolation. Maturity in one pillar often depends on maturity in another. The following matrix identifies the primary dependencies:

| Pillar | Depends On | Rationale |
|---|---|---|
| Deployment Competence | Tools | You cannot build deployment competence for AI systems you have not inventoried. Tool discovery precedes literacy. |
| Policy | Deployment Competence | Policy requires understanding what AI is used and how; deployment competence informs policy design. |
| Policy | Tools | Policy must reference specific tools and data flows; a policy without a tool inventory is abstract. |
| Training | Deployment Competence | Training content derives from understanding of what systems exist and what risks they present. |
| Training | Policy | Training teaches the rules; those rules must exist in policy first. |
| Evidence | Tools | You cannot document AI usage you do not know exists. Tool inventory is the evidence foundation. |
| Evidence | Training | Evidence of competence requires a training programme that produces assessable results. |
| Governance | Policy | Governance enforces and reviews policy; without policy, governance has nothing to govern. |
| Governance | Evidence | Governance decisions require evidence of current state; without evidence, governance is uninformed. |
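
One way to turn the dependency matrix into a work sequence is a topological sort, so that each pillar is addressed only after the pillars it depends on. The sketch below uses Python's standard `graphlib` module; the variable names are illustrative, and treating the matrix as a strict build order is an interpretation rather than something the standard prescribes.

```python
from graphlib import TopologicalSorter

# Each pillar maps to the pillars it depends on, as listed in the matrix.
DEPENDS_ON = {
    "Deployment Competence": {"Tools"},
    "Policy": {"Deployment Competence", "Tools"},
    "Training": {"Deployment Competence", "Policy"},
    "Evidence": {"Tools", "Training"},
    "Governance": {"Policy", "Evidence"},
}

# static_order() yields every pillar after all of its prerequisites.
build_order = list(TopologicalSorter(DEPENDS_ON).static_order())
print(build_order)
```

With these dependencies the order is fully determined: Tools, Deployment Competence, Policy, Training, Evidence, Governance.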

Anomaly Detection Rules

When pillar scores are inconsistent with the dependency matrix, the assessment should flag the anomaly for review. Inconsistent scores often indicate either measurement error or a structural gap that undermines the higher-scoring pillar.

| Anomaly Pattern | Interpretation | Action |
|---|---|---|
| Tools < 25, Evidence > 50 | Cannot document what you do not know exists | Verify Evidence pillar responses; likely overestimated |
| Policy < 25, Training > 50 | Training without policy boundaries is unanchored | Assess whether training content actually references enforceable standards |
| Deployment Competence < 25, Policy > 50 | Policy exists but no one understands the landscape it governs | Policy may be aspirational rather than operational |
| Training < 25, Evidence > 50 | Evidence of competence without a training programme | Evidence may consist of policy documents only, not competence records |
| Tools < 25, Governance > 50 | Governing AI without knowing what AI is deployed | Governance may be performative rather than substantive |

Tolerance threshold: If a pillar outscores a pillar it depends on by more than 40 points, the gap should trigger manual review. A gap of more than 50 points should be flagged as a likely measurement error.
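
The tolerance rule can be sketched as a check over the direct dependencies in the matrix. This is a minimal illustration, not the assessment's actual implementation: the function name, the flag strings, and the example scores are hypothetical, and the sketch covers only the 40-point and 50-point gaps, not the fixed-threshold patterns in the anomaly table.

```python
# Direct dependencies from the dependency matrix.
DEPENDS_ON = {
    "Deployment Competence": ["Tools"],
    "Policy": ["Deployment Competence", "Tools"],
    "Training": ["Deployment Competence", "Policy"],
    "Evidence": ["Tools", "Training"],
    "Governance": ["Policy", "Evidence"],
}

REVIEW_GAP = 40  # a gap above this triggers manual review
ERROR_GAP = 50   # a gap above this suggests a measurement error


def flag_anomalies(scores: dict[str, float]) -> list[tuple[str, str, str]]:
    """Return (dependent, dependency, flag) for each gap over tolerance."""
    flags = []
    for dependent, dependencies in DEPENDS_ON.items():
        for dependency in dependencies:
            # Only gaps where the dependency scores lower are relevant.
            gap = scores[dependent] - scores[dependency]
            if gap > ERROR_GAP:
                flags.append((dependent, dependency, "likely measurement error"))
            elif gap > REVIEW_GAP:
                flags.append((dependent, dependency, "manual review"))
    return flags


# Hypothetical scores: Tools lags far behind Policy and Evidence.
scores = {
    "Tools": 20,
    "Deployment Competence": 45,
    "Policy": 65,
    "Training": 60,
    "Evidence": 75,
    "Governance": 65,
}
for flag in flag_anomalies(scores):
    print(flag)
```

In this example Policy exceeds Tools by 45 points (manual review) and Evidence exceeds Tools by 55 points (likely measurement error); every other dependent pair stays within tolerance.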

The Lowest-Pillar Principle

An organisation's compliance posture is only as strong as its weakest pillar. Sophisticated training cannot compensate for absent governance. Comprehensive evidence cannot substitute for missing policy. This principle has practical consequences:

- An organisation scoring 80 across five pillars but 20 on Tools has a systemic blind spot — it does not know what AI systems are in use, making all other pillars unreliable.

- An organisation scoring 70 across five pillars but 15 on Evidence cannot demonstrate compliance — regardless of actual competence, it will fail a regulatory inquiry.

- An organisation scoring 65 across five pillars but 10 on Governance lacks accountability — no one is responsible for ensuring the other pillars function as a system.

The weighted overall score may mask these vulnerabilities. The Lowest-Pillar Principle ensures they surface.
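
To make the masking effect concrete, the sketch below recomputes the three profiles from the bullets above. It assumes the overall score is a weighted sum using the published pillar weights; the helper names are hypothetical.

```python
# Pillar weights from the Pillar Weight Rationale section.
WEIGHTS = {
    "Deployment Competence": 0.15,
    "Policy & Data Protection": 0.20,
    "Training": 0.20,
    "Tools": 0.15,
    "Evidence": 0.15,
    "Governance": 0.15,
}


def profile(high: float, weak_pillar: str, low: float) -> dict[str, float]:
    """Score every pillar at `high` except one weak pillar at `low`."""
    return {name: (low if name == weak_pillar else high) for name in WEIGHTS}


def overall_and_weakest(scores: dict[str, float]) -> tuple[float, str]:
    """Return the assumed weighted-sum score and the lowest-scoring pillar."""
    overall = round(sum(WEIGHTS[name] * s for name, s in scores.items()), 2)
    weakest = min(scores, key=scores.get)
    return overall, weakest


for high, weak, low in [(80, "Tools", 20), (70, "Evidence", 15), (65, "Governance", 10)]:
    overall, weakest = overall_and_weakest(profile(high, weak, low))
    print(f"overall={overall}, weakest={weakest} ({low})")
```

Under that assumption the three profiles come out at 71.0, 61.75, and 56.75 overall. None of those numbers, taken alone, reveals a pillar sitting at 20 or below, which is why the lowest pillar is reported alongside the weighted score.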

Certification Requirements

Certified status requires overall weighted score >= 75 AND every pillar >= 50.
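
The two conditions can be expressed as a single check. The sketch below assumes the overall score is a weighted sum of pillar scores; the function name is hypothetical, and rounding the overall score to two decimals before comparison is an added assumption so that floating-point noise does not decide a borderline case.

```python
# Pillar weights from the Pillar Weight Rationale section.
WEIGHTS = {
    "Deployment Competence": 0.15,
    "Policy & Data Protection": 0.20,
    "Training": 0.20,
    "Tools": 0.15,
    "Evidence": 0.15,
    "Governance": 0.15,
}

OVERALL_MINIMUM = 75  # overall weighted score required
PILLAR_MINIMUM = 50   # score required on every pillar


def is_certified(pillar_scores: dict[str, float]) -> bool:
    """Apply both certification conditions to a set of pillar scores."""
    # Assumption: weighted sum, rounded to two decimals before comparing.
    overall = round(sum(WEIGHTS[n] * pillar_scores[n] for n in WEIGHTS), 2)
    every_pillar_ok = all(pillar_scores[n] >= PILLAR_MINIMUM for n in WEIGHTS)
    return overall >= OVERALL_MINIMUM and every_pillar_ok


strong_but_uneven = {name: 90 for name in WEIGHTS}
strong_but_uneven["Evidence"] = 49  # one pillar just under the minimum
print(is_certified(strong_but_uneven))
```

The example profile has a high overall score but fails because Evidence is below 50, which is the per-pillar condition doing its job.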

TwinLadder Level Mapping

| TwinLadder Level | Score Range | Regulatory Status |
|---|---|---|
| Level 0: AI Literacy | 40-55 | Article 4 compliance floor |
| Level 1: Professional Twin | 55-70 | Implied by "context" requirement |
| Level 2: Operational Twin | 70-85 | Strategic advantage |
| Level 3: Ecosystem Twin | 85-100 | Market leadership |

Scoring Layers

The maturity model produces data at three levels of abstraction, each designed for a different audience and decision context.

Strategic Layer (Board / C-Suite)

Audience: Board members, CEO, COO, General Counsel

Cadence: Quarterly reporting; event-driven updates on material changes

Metrics:

- Overall maturity score — single weighted number (0-100) representing organisational AI competence posture

- Compliance risk exposure — traffic-light classification (Red: below compliance floor on any pillar; Amber: within 10 points of compliance floor; Green: all pillars above floor with margin)

- Investment priorities — rank-ordered list of pillars requiring budget allocation, derived from gap analysis

- Sector benchmark position — percentile ranking against peer organisations in same sector and size band (when benchmark data is available)

- Trend direction — quarter-over-quarter change in overall score and per-pillar scores

The Strategic Layer answers: Are we compliant? Where should we invest? How do we compare?
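
The traffic-light classification described above can be sketched as follows. Several details are assumptions: the floor is set to 50 (the document places it at approximately 50-55), "with margin" is read as the same 10 points used for Amber, and the function name is hypothetical.

```python
def risk_light(pillar_scores: dict[str, float],
               floor: float = 50.0,
               margin: float = 10.0) -> str:
    """Classify compliance risk from the lowest pillar score."""
    lowest = min(pillar_scores.values())
    if lowest < floor:
        return "Red"    # at least one pillar is below the compliance floor
    if lowest < floor + margin:
        return "Amber"  # at or above the floor, but within the margin
    return "Green"      # every pillar clears the floor with margin


# Hypothetical pillar scores for illustration.
print(risk_light({"Tools": 48, "Training": 80}))
print(risk_light({"Tools": 55, "Training": 80}))
print(risk_light({"Tools": 62, "Training": 80}))
```

Because the classification depends only on the lowest pillar, a single weak pillar is enough to turn the whole organisation Red, in line with the Lowest-Pillar Principle.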

Tactical Layer (Department Heads)

Audience: Department heads, HR directors, compliance officers, CTO/CIO

Cadence: Monthly review; post-assessment update

Metrics:

- Per-pillar scores — six individual scores with level classification (Exploring / Developing / Implementing / Optimizing)

- Gap analysis — for each pillar, the specific indicators not yet satisfied and the distance to the next maturity level

- Training recommendations — prioritised list of training interventions by department and role, derived from pillar scores and dependency analysis

- Implementation roadmap — sequenced action plan respecting cross-pillar dependencies (e.g., complete tool inventory before attempting evidence portfolio)

- Anomaly flags — any cross-pillar inconsistencies detected by the dependency matrix

The Tactical Layer answers: Where exactly are the gaps? What should we do next? In what order?
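
The "distance to the next maturity level" part of the gap analysis can be illustrated with a short sketch. It uses the stage boundaries from the Overview; the function name and the example scores are hypothetical, and the indicator-level part of the gap analysis is not modelled here.

```python
from typing import Optional

# Stage labels with their lower bounds, from the Overview table.
STAGES = [("Exploring", 0), ("Developing", 26), ("Implementing", 51), ("Optimizing", 76)]


def gap_to_next_stage(score: float) -> tuple[str, Optional[str], float]:
    """Return (current stage, next stage, points needed to reach it)."""
    if not 0 <= score <= 100:
        raise ValueError(f"score must be between 0 and 100, got {score}")
    current = max(i for i, (_, lower) in enumerate(STAGES) if score >= lower)
    if current == len(STAGES) - 1:
        return STAGES[current][0], None, 0  # already in the top stage
    next_label, next_lower = STAGES[current + 1]
    return STAGES[current][0], next_label, next_lower - score


# Hypothetical pillar scores for one department.
for pillar, score in {"Tools": 42, "Evidence": 25, "Training": 80}.items():
    print(pillar, gap_to_next_stage(score))
```

Sorting pillars by the points needed gives a rough view of which stage transitions are closest, which can then be checked against the cross-pillar dependencies before sequencing work.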

Operational Layer (Individual)

Audience: Individual professionals, team leads, learning and development coordinators

Cadence: Per-assessment; continuous for learning path tracking

Metrics:

- Personal TwinLadder level — individual classification (Level 0: AI Literacy, Level 1: Professional Twin, Level 2: Operational Twin, Level 3: Ecosystem Twin)

- Learning path — personalised sequence of modules addressing individual competence gaps, calibrated to role and current level

- Competence verification results — per-skill assessment outcomes (workflow-based scenario performance, not quiz scores)

- Progress tracking — movement toward next level with specific milestones identified

- Peer comparison — anonymised position within role cohort (optional, organisation-configurable)

The Operational Layer answers: Where am I? What should I learn next? Can I demonstrate competence?

Design Principles

- Comfort over code. Indicators measure practical competence, not technical AI knowledge.

- Workflow-based assessment. Anchored in observable workplace practices.

- Compliance as floor, competence as mission. Distinguishes meeting Article 4 from building advantage.

- Competence paradox addressed. Higher levels specifically counter AI dependence risks.

- Proportionality. Maturity should be proportionate to AI risk profile.

Appendix A: Article 4 Compliance Mapping

The following matrix maps each operative phrase of Article 4 to the TwinLadder pillars it engages. P indicates the primary pillar; S indicates a secondary pillar.

| Article 4 Phrase | Deployment Competence | Policy & Data Protection | Training | Tools | Evidence | Governance |
|---|---|---|---|---|---|---|
| "Providers and deployers of AI systems" | P | S | ||||
| "shall take measures" | S | P | ||||
| "to ensure, to their best extent" | S | P | S | |||
| "a sufficient level of AI literacy" | P | S | ||||
| "of their staff and other persons dealing with the operation and use of AI systems on their behalf" | S | P | S | |||
| "taking into account their technical knowledge, experience, education and training" | S | P | ||||
| "the context in which the AI systems are to be used, and considering the persons or groups of persons on whom the AI systems are to be used" | P | S | S | S |

Key findings from the mapping:

- Every pillar is engaged by at least two operative elements. No pillar is redundant.

- The heaviest regulatory burden falls on Deployment Competence (2 primary mappings), Training (2 primary), Evidence (1 primary, enabling factor throughout), and Governance (1 primary, enabling factor throughout).

- The "staff and other persons" phrase extends the obligation beyond employees to contractors, consultants, temporary workers, and outsourced service providers.

- The "context of use" and "affected persons" phrases make one-size-fits-all training legally insufficient.

For the full line-by-line analysis including ambiguity notes, GDPR intersection mapping, and compliance floor justification, see the companion document: EU AI Act Article 4 — TwinLadder Assessment Maturity Model Mapping.

Appendix B: Versioning & Governance

Document Version

| Field | Value |
|---|---|
| Version | 1.0.0 |
| License | CC BY-SA 4.0 |
| Published | 2026-03-08 |
| Next scheduled review | August 2026 (aligned with Article 4 enforcement commencement) |

Review Cadence

- Annual major review (x.0.0): structural changes to pillars, weights, scoring methodology, or maturity level definitions. Triggered by significant enforcement practice developments, Commission delegated acts, or harmonised standard publication.

- Quarterly minor adjustments (x.y.0): indicator refinements, new assessment questions, benchmark data updates, and editorial corrections. Triggered by user feedback, deployment data, or minor regulatory guidance.

- Patch updates (x.y.z): typographical corrections, formatting, and non-substantive clarifications.
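
The three change types map onto a three-part version number. The sketch below shows one way to compute the next version string; the function name is hypothetical, and resetting the lower components to zero on a major or minor change is an assumption inferred from the x.0.0 and x.y.0 notation above.

```python
def next_version(current: str, change: str) -> str:
    """Return the next version string for a 'major', 'minor', or 'patch' change."""
    major, minor, patch = (int(part) for part in current.split("."))
    if change == "major":   # structural changes to pillars, weights, or scoring
        return f"{major + 1}.0.0"
    if change == "minor":   # indicator refinements, new questions, benchmark data
        return f"{major}.{minor + 1}.0"
    if change == "patch":   # typographical and non-substantive corrections
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError(f"unknown change type: {change!r}")


print(next_version("1.0.0", "minor"))
```

Starting from version 1.0.0, a quarterly indicator refinement would produce 1.1.0, and a later typographical fix would produce 1.1.1.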

Standard Governance Board

The TwinLadder Assessment Maturity Model is governed by a Standard Governance Board with the following structure:

- Permanent chair: TwinLadder (as originating organisation and standard maintainer)

- Board composition: Representatives from adopting organisations, legal practitioners, data protection officers, and academic researchers. Composition to be formalised as adoption grows.

- Decision authority: Major version changes require Board approval. Minor adjustments may be published by the permanent chair with Board notification.

Public Comment Process

Major version changes (x.0.0) follow a public comment process:

- Draft publication — proposed changes published with rationale and impact assessment

- Comment period — minimum 30 days for public comment via the project repository

- Response document — all substantive comments addressed in a published response

- Final publication — updated version published with change log

Repository

The canonical version of this document and the associated assessment methodology are maintained at the TwinLadder project repository. Contributions, issues, and proposed changes are welcome through the standard open-source contribution process.

This document is the open-source standard for AI competence assessment under the TwinLadder framework. It should be reviewed and updated as Commission guidance, enforcement practice, and harmonised standards develop.